When I talk to other startup data scientists, most of their projects started like this: an executive says “we have a ton of data, it must be valuable,” a team is built with the vague goal of extracting nuggets of wisdom, historical data is pulled from disparate systems, then cleansed and normalized with great effort, and eventually some signal is found among the noise.

This is the exact wrong way to use AI. The power of AI is not in retrospective analysis, but in altering the decisions that need to be made right now.

In our case - the emergency room - it’s valuable to know that nurses are overly conservative about 30% of the time when determining patient severity. Management could, after all, have a word with those personnel with the greatest variance. Healthcare spends enormous sums of money generating these types of “reports” on provider decision-making and clinical performance. Unfortunately, this type of solution is reactive and slow. The reports come out at the end of the month, lack granularity and clinical context, and put a large administrative burden on Emergency Department Directors.

A far better solution would simply be to help nurses make more informed decisions at the time a decision needs to be made. And the only way to realistically do this is through software that uses modern AI.

In other words, AI should not be in the background. It should be part of the core workflow itself.

A history of real time algorithms

A large part of my career has involved applying real time algorithms to a business need. When I started Mint.com, my initial motivation was to stop spending an hour each weekend manually categorizing transactions in Quicken. Why didn’t Quicken (at the time) know that “Whole Foods” was groceries? Or that the “Jolly Roger, Providence RI” was a cafe? A Google search knew.

Ultimately the solution came from correlating business name fragments, phone numbers, and locations with a database of yellow page listings. Once we knew the category of spending - instantly, as transactions were streaming in - we could send alerts for spending. The resulting push notifications and “budget exceeded” emails became essential to driving usage on Mint. We gave people the data they needed to make money decisions that day, not later in a quarterly or monthly report.

At Fountain - which connected users to experts over video chat - our core algorithm was formed by analyzing millions of resumes we found online. If someone had a title of “UX Designer” and then listed “Photoshop” as a skill, we knew the two were related. As a customer typed a question, we would pick out these keywords, trace them through the graph of human expertise, and contact the relevant expert in less than a second.
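The idea of tracing keywords through a graph of expertise can be illustrated with a toy co-occurrence graph. This is only a sketch under stated assumptions, not Fountain's actual algorithm: the resumes are made up, and `build_graph` and `related_titles` are hypothetical names.

```python
from collections import defaultdict
from itertools import combinations

# Made-up resumes for illustration: a job title plus listed skills.
RESUMES = [
    {"title": "ux designer", "skills": ["photoshop", "wireframing"]},
    {"title": "ux designer", "skills": ["photoshop", "sketch"]},
    {"title": "tax accountant", "skills": ["excel", "bookkeeping"]},
]


def build_graph(resumes):
    """Count how often two terms (titles or skills) share a resume."""
    graph = defaultdict(lambda: defaultdict(int))
    for resume in resumes:
        terms = {resume["title"], *resume["skills"]}
        for a, b in combinations(sorted(terms), 2):
            graph[a][b] += 1
            graph[b][a] += 1
    return graph


def related_titles(graph, question, titles):
    """Rank job titles by co-occurrence with keywords found in the question."""
    scores = defaultdict(int)
    for word in question.lower().split():
        for neighbor, weight in graph.get(word, {}).items():
            if neighbor in titles:
                scores[neighbor] += weight
    return sorted(scores, key=scores.get, reverse=True)


graph = build_graph(RESUMES)
titles = {resume["title"] for resume in RESUMES}
best = related_titles(graph, "How do I crop a layer in Photoshop", titles)
```

Here the keyword "photoshop" links to "ux designer" on two resumes, so that title ranks first for the question.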

Vital, while in a completely different industry, follows this real time trend. Any time patient information changes - lab values, vital signs or even free-text nurses’ notes - a dozen machine learning predictions update, all in around 200 milliseconds. Our algorithms are meant to help shape decisions as they are being made.

An EHR for computable health

In Vital’s emergency room EHR, all healthcare data is addressable. Just like specifying a location using a street address and ZIP code, one can access data like “chart.vitals.systolic_bp” or “labs.troponin” or “patient.age” without having to be a programmer.
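As a rough illustration of what addressable data could look like, here is a minimal Python sketch that resolves a dotted address against a nested record. The `resolve` function and the record layout are assumptions made for this example, not Vital's actual API.

```python
def resolve(record, address):
    """Walk a nested dict using a dotted address such as 'chart.vitals.systolic_bp'."""
    value = record
    for key in address.split("."):
        if not isinstance(value, dict) or key not in value:
            return None  # the address does not exist for this patient
        value = value[key]
    return value


# Hypothetical patient record; field names follow the examples in the text.
patient_record = {
    "patient": {"age": 67},
    "chart": {"vitals": {"systolic_bp": 142, "heart_rate": 88}},
    "labs": {"troponin": 0.02},
}

systolic = resolve(patient_record, "chart.vitals.systolic_bp")
missing = resolve(patient_record, "labs.lactate")
```

Returning `None` for an unknown address is one possible design choice: a value that has not been recorded yet is treated as missing data rather than as an error.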

Machine learning then becomes simple. Algorithms “subscribe” to these clinical variables, and Vital issues a command to recompute a prediction on any relevant change.
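The subscribe-and-recompute pattern might be sketched as follows. `PredictionBus` and `hypotension_flag` are hypothetical names, and the threshold rule is a toy stand-in for a trained model, not clinical guidance.

```python
from collections import defaultdict


class PredictionBus:
    """Routes changes in clinical variables to the models that subscribed to them."""

    def __init__(self):
        self.values = {}                      # latest value per address
        self.subscribers = defaultdict(list)  # address -> list of models

    def subscribe(self, addresses, model):
        for address in addresses:
            self.subscribers[address].append(model)

    def update(self, address, value):
        """Store the new value and recompute only the models that depend on it."""
        self.values[address] = value
        models = self.subscribers.get(address, [])
        return {model.__name__: model(self.values) for model in models}


def hypotension_flag(values):
    # Toy rule standing in for a real prediction (illustrative threshold only).
    bp = values.get("chart.vitals.systolic_bp")
    return bp is not None and bp < 90


bus = PredictionBus()
bus.subscribe(["chart.vitals.systolic_bp"], hypotension_flag)
refreshed = bus.update("chart.vitals.systolic_bp", 85)
untouched = bus.update("patient.age", 67)
```

A change to `patient.age` triggers no work here because nothing subscribed to it, which is what keeps recomputation cheap.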

Numeric values are automatically normalized, and free text is “vectorized”. The latter is especially powerful: no other health platform we know of is able to comprehend and utilize the unstructured free text of doctor and nurse notes.

Text vectorization is an advanced natural language processing (NLP) technique that’s only a few years old. Pioneered by Google (see "Distributed Representations of Sentences and Documents" by Le and Mikolov), it recognizes the similarity between words and even notes of variable length. If clinical notes contain sentence fragments like “we gave ibuprofen for the pain” or “pain was alleviated with aspirin” or “the pain medication naproxen was used,” the words ibuprofen, aspirin, and naproxen end up with similar vector representations. That’s because they are used in similar contexts: medications to relieve “pain”.
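The intuition that words used in similar contexts get similar vectors can be shown with a much simpler technique than the neural method in the paper. The sketch below uses plain co-occurrence counts and cosine similarity. It is a stand-in for illustration, not Paragraph Vector and not Vital's implementation, and the fourth note is made up to provide an unrelated word.

```python
import math
from collections import Counter, defaultdict

NOTES = [
    "we gave ibuprofen for the pain",
    "pain was alleviated with aspirin",
    "the pain medication naproxen was used",
    "patient arrived by ambulance from home",  # unrelated note, added for contrast
]


def context_vectors(notes):
    """Represent each word by counts of the other words that share a note with it."""
    vectors = defaultdict(Counter)
    for note in notes:
        words = note.split()
        for i, word in enumerate(words):
            for j, other in enumerate(words):
                if i != j:
                    vectors[word][other] += 1
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[key] * b[key] for key in a if key in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


vectors = context_vectors(NOTES)
drug_similarity = cosine(vectors["ibuprofen"], vectors["naproxen"])
unrelated_similarity = cosine(vectors["ibuprofen"], vectors["ambulance"])
```

The two medications share context words such as "pain", so their similarity is positive, while "ibuprofen" and "ambulance" share none. Learned embeddings capture the same effect far more robustly and from far more text.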

Text vectorization, data normalization and “watching” for patient changes are built into the core of Vital’s new emergency EHR platform. That makes it easy to develop and serve real time machine learning models: simply list the variables needed for a prediction, supply training data as a CSV, and specify a network architecture (anything from logistic regression to deep or recurrent neural networks).
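To make those three ingredients concrete, here is a hedged sketch using only the Python standard library: a list of input variables, training data as CSV, and the simplest architecture mentioned, logistic regression. The `SPEC` format, the column names, and the data are all invented for illustration and do not reflect Vital's real configuration.

```python
import csv
import io
import math

# Hypothetical model specification: inputs, target column, architecture.
SPEC = {
    "inputs": ["patient.age", "chart.vitals.systolic_bp"],
    "target": "admitted",
    "architecture": "logistic_regression",
}

# Made-up training data, inlined as CSV so the example is self-contained.
TRAINING_CSV = """patient.age,chart.vitals.systolic_bp,admitted
25,120,0
34,118,0
41,125,0
72,92,1
80,88,1
68,95,1
"""


def load(spec, text):
    rows = list(csv.DictReader(io.StringIO(text)))
    X = [[float(row[name]) for name in spec["inputs"]] for row in rows]
    y = [int(row[spec["target"]]) for row in rows]
    return X, y


def standardize(X):
    """Z-score each column; return scaled rows and the (mean, std) per column."""
    stats = []
    for column in zip(*X):
        mean = sum(column) / len(column)
        std = math.sqrt(sum((v - mean) ** 2 for v in column) / len(column)) or 1.0
        stats.append((mean, std))
    scaled = [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in X]
    return scaled, stats


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(X, y, lr=0.5, epochs=500):
    """Fit logistic regression with plain stochastic gradient descent."""
    weights, bias = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for row, label in zip(X, y):
            error = sigmoid(bias + sum(w * v for w, v in zip(weights, row))) - label
            bias -= lr * error
            weights = [w - lr * error * v for w, v in zip(weights, row)]
    return weights, bias


def predict(weights, bias, stats, raw_row):
    scaled = [(v - m) / s for v, (m, s) in zip(raw_row, stats)]
    return sigmoid(bias + sum(w * v for w, v in zip(weights, scaled)))


X, y = load(SPEC, TRAINING_CSV)
X_scaled, stats = standardize(X)
weights, bias = train(X_scaled, y)
older_low_bp = predict(weights, bias, stats, [75, 90])
younger_normal_bp = predict(weights, bias, stats, [30, 120])
```

On this toy data the model assigns a higher admit probability to the older patient with low blood pressure than to the younger one with normal blood pressure. A real model would need far more data and proper validation.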

Not only are we able to take data into this novel system, but we can examine the contribution of each factor to the overall outcome - a system that gives insight into the proverbial black box of AI.
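How such contributions are computed is not described here. For a linear model such as logistic regression, one simple approach is to multiply each weight by its standardized input value. The sketch below assumes that approach, with hypothetical weights and inputs. Deep networks need other attribution methods.

```python
import math

# Hypothetical weights for a logistic model over standardized inputs.
WEIGHTS = {
    "patient.age": 1.2,
    "chart.vitals.systolic_bp": -0.8,
    "labs.troponin": 2.0,
}
BIAS = -0.5


def explain(inputs):
    """Return the predicted probability and per-factor contributions to the logit."""
    contributions = {name: WEIGHTS[name] * value for name, value in inputs.items()}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Largest absolute contribution first, so the main drivers are listed on top.
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return probability, ranked


# Standardized values (z-scores) for one made-up patient.
probability, ranked = explain({
    "patient.age": 1.5,
    "chart.vitals.systolic_bp": -1.0,
    "labs.troponin": 0.25,
})
```

For this made-up patient, age contributes the most to the prediction, followed by blood pressure and then troponin.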

As a result, Vital has been able to develop a number of machine learning algorithms for its platform in short order:

Patient admit or discharge

Fractional emergency severity index (ESI)

30-day readmit

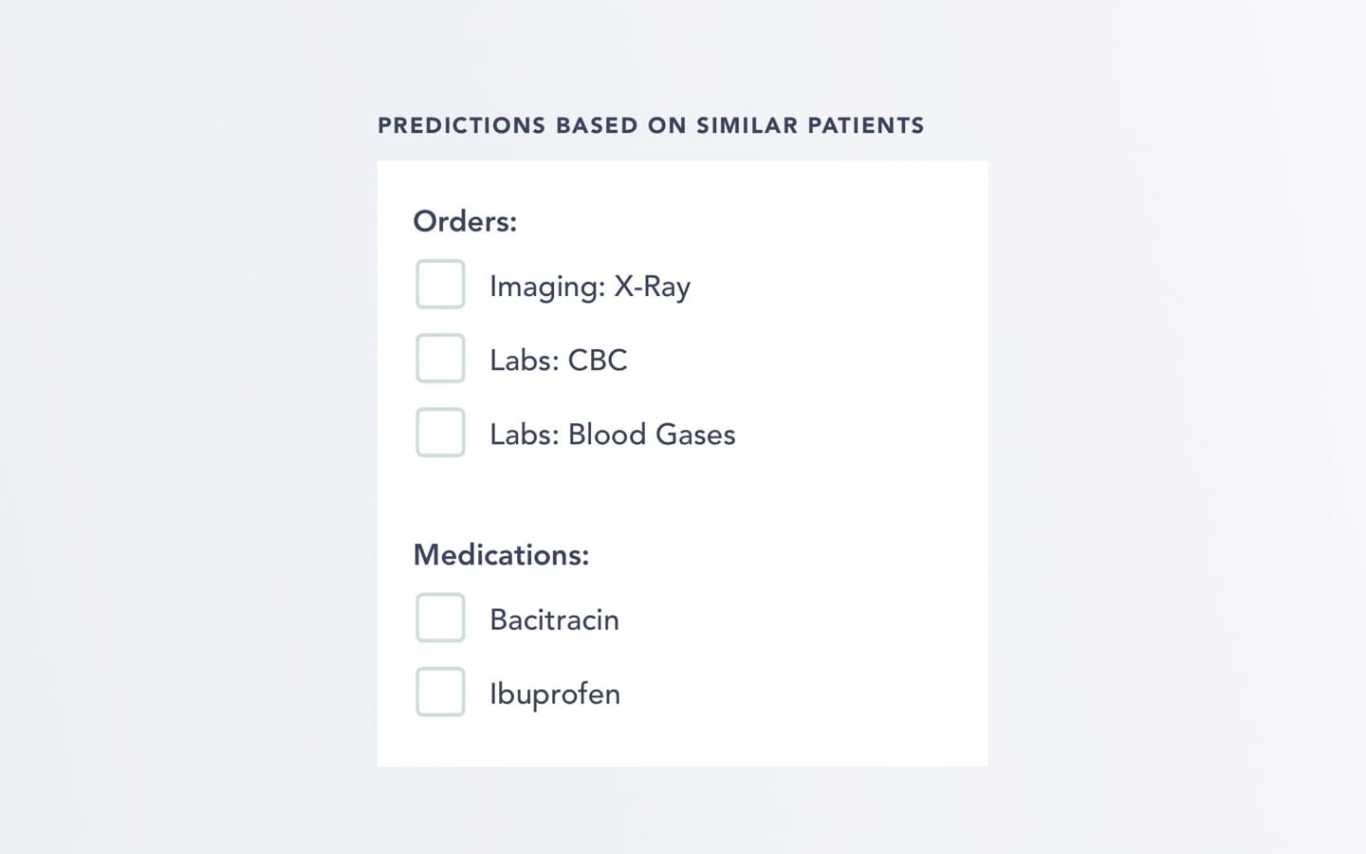

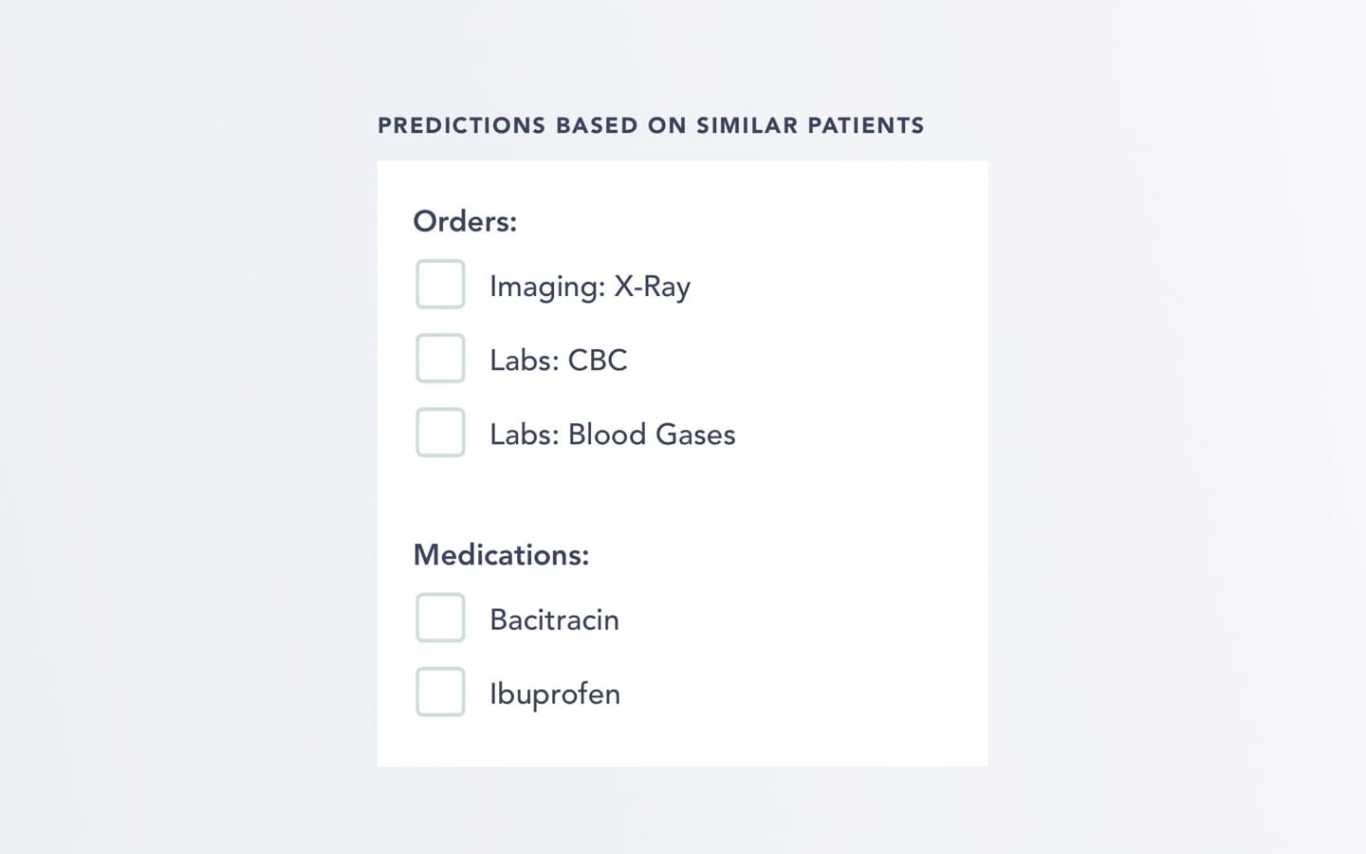

Expected labs, imaging, procedures, medications

Beyond Vital’s own predictive models, any researcher who develops an algorithm for, say, sepsis prediction could deploy it using Vital. We simply need a list of clinical inputs (e.g. “chart.vitals”) and either training data or a pre-trained network from Torch, TensorFlow, or Deeplearning4j.

While not available just yet, we hope that one day this may allow an “app store” for real-time health algorithms. The last decade has produced some amazing research results in the realm of AI + health. It’s time there existed a platform to actually put them into practice.

We strongly believe in well-tested, peer-reviewed algorithms to lighten the load of the final decision makers: doctors and nurses. We are committed to pioneering the future of AI in healthcare. We are Vital.